A Tale Of Two AI Products

Apple's Intelligence versus Microsoft's Recall. They're both doing the same thing, so why did users react so differently to the two products?

We’ve had a busy month or so in the consumer-level AI space. On May 20, Microsoft announced Recall, a new AI feature that’s coming to Windows 11 in an update later this year. On June 10, Apple announced Apple Intelligence (with initials that are coincidentally “A.I.”) at its annual Worldwide Developers Conference. Both were major product announcements for their respective companies, but the aftermath of the two events was drastically different.

Basics: Recall and Intelligence

Let’s start from the beginning, though. What are Recall and Intelligence? (I’m sorry, I can’t bring myself to write Apple Intelligence every time. It’s a level of cringe I can’t muster the will to sustain.)

Both products are essentially asking to know everything that goes on in your device.

Both products are pitched as trying to make your life easier by making it easier to surface information you’ve lost in your digital world.

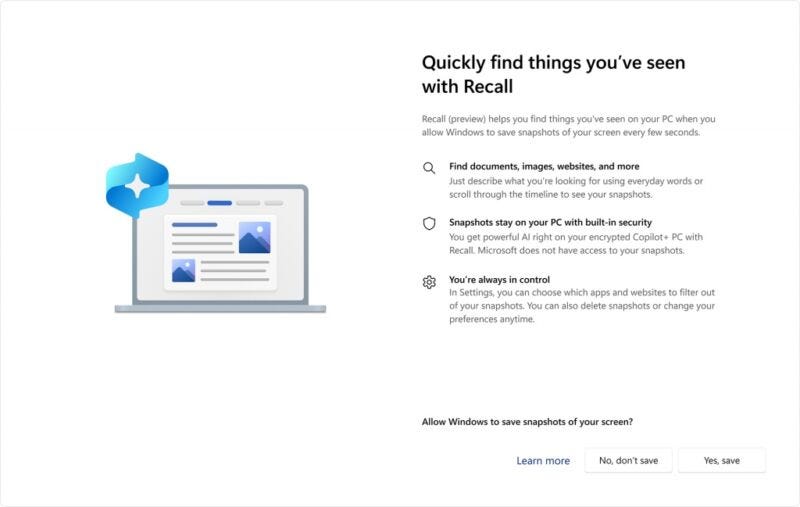

Recall does it by taking screenshots of your computer screen every few seconds, then running those screenshots locally through object detection and optical character recognition. The result is a searchable database of every word and item it can see, so that you can go back and find things more easily.
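To make that pipeline concrete, here is a minimal sketch of the last step: storing text pulled from screenshots in a local SQLite table and searching it. Everything in it is an assumption for illustration. The `snapshots` table, its columns, and the helper names are hypothetical and are not Recall's actual schema, and the OCR step is skipped entirely by inserting text that has supposedly already been extracted.

```python
import sqlite3
from datetime import datetime, timedelta


def build_index(conn):
    # Hypothetical schema: one row per screenshot, holding its OCR'd text.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS snapshots ("
        "id INTEGER PRIMARY KEY, taken_at TEXT, app TEXT, ocr_text TEXT)"
    )


def add_snapshot(conn, taken_at, app, ocr_text):
    # In a real system ocr_text would come from an OCR pass over the image.
    conn.execute(
        "INSERT INTO snapshots (taken_at, app, ocr_text) VALUES (?, ?, ?)",
        (taken_at.isoformat(), app, ocr_text),
    )


def search(conn, term):
    # Plain substring match; SQLite's LIKE is case-insensitive for ASCII.
    rows = conn.execute(
        "SELECT taken_at, app FROM snapshots "
        "WHERE ocr_text LIKE ? ORDER BY taken_at",
        (f"%{term}%",),
    )
    return rows.fetchall()


conn = sqlite3.connect(":memory:")
build_index(conn)

# Three made-up snapshots, spaced a few seconds apart.
start = datetime(2024, 6, 1, 9, 0, 0)
add_snapshot(conn, start, "Browser", "Blue pantsuit with sequin lace, $89")
add_snapshot(conn, start + timedelta(seconds=5), "Chat", "did you see the quarterly chart?")
add_snapshot(conn, start + timedelta(seconds=10), "Browser", "Checkout: enter card number")

hits = search(conn, "pantsuit")
print(hits)
```

Notice that the third row gets indexed just like the first two. An index like this has no idea which on-screen text is sensitive unless something filters it before it is written.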

Apple Intelligence is taking all of the information on your phone to train a (mostly) local machine learning model that tries to suggest useful things to you, answer your questions better, and make connections between disparate data points.

For two products with similar high-level goals, there have been vastly different reactions from the target users. What are the differences between the products and the ways that they were introduced that caused people to view them differently? And what can we learn from this about how to design machine learning tools that people actually want to use?

The Starting Point

Microsoft

Microsoft is starting from a position of low trust. In recent years, they’ve done a number of things that have made users angry: adding ads to every spare bit of screen real estate in an operating system they already charge money for, making it nearly impossible to use their operating system without signing up for an online account, sending off boatloads of telemetry data without being upfront about it, and changing people’s settings after updates in a way that usually ends up giving them access to more data than users wanted them to have.

Additionally, within the greater Microsoft corporation, the cloud infrastructure product has repeatedly had security breaches, with one in the last few months attributed to a suspected state actor. Sure, most people don’t know anything about that, but the users who are the most concerned and therefore most vocal about security issues did know that, and they’ve been the ones leading the charge against Recall.

Apple

Apple has made privacy a part of their marketing pitch for years. While they’ve been no stranger to making people angry because of their walled-garden approach to…everything, their reluctance to allow users to repair their own devices, and the general penchant for extracting every single penny out of a user that they can, there have really only been two major privacy issues that I can remember in the past decade.

The first was the iCloud celebrity nudes leak, which was the result of a phishing attack, and the second was one that many iPhone users probably never caught wind of, which was Apple’s ill-fated CSAM scanning program that was eventually scrapped after intense uproar. Outside of those events, Apple has generally had a positive reputation for respecting and even strengthening the security and privacy of its devices against bad actors of all kinds.

Apple has also been talking about machine learning on their devices for years now. ML is a kind of technology that enables AI, and it powers the suggested locations in Maps, the suggested apps and widgets on the home screen, and the time-to-leave popups that already exist on the iPhone. Users, in some way, are familiar with a type of AI on their devices already, even if they’re not Siri users.

The Rollout

Microsoft

Microsoft said all the right things in their announcement of the product. They led with a story about the product manager using Recall to help find every blue dress that she had seen in her search for the perfect one for her grandmother. She was able to tell Recall to show her “blue pantsuit with sequin lace from abuelita” and it filtered down to…exactly that. Not only was it able to pull up tabs that she had opened and closed without saving, it was able to pull a specific message from Discord that contained the pantsuit she was looking for.

In professional settings, the product manager showed off how even if you just remembered a small detail about a file you were looking for—in this case, that there was a chart with purple writing on it—Recall could take that input and pull up the file that contained it. No more trying to remember if the file had come to you through email or Teams or was demoed on a video call. No matter where it was seen, Recall had a copy of it. Recall’s use case had now been established, and the features were pretty impressive.

Next, they told us that it was encrypted, it was secure and only accessible by the user profile whose data it contained, and that because it was stored and processed locally, it was as secure as it could be, assuming the baddies didn’t get their hands on your computer and log into it. And on top of that, the feature would only work on the brand new Windows laptops that had specific hardware that allowed it to run, so if you didn’t want it, you could just not buy a computer that could run it.

The problem is that none of that was true. Shortly after the release of the developer software, security researchers determined that:

You could run Recall on allegedly unsupported hardware

The data could be accessed remotely (and they built a tool that did just that)

Any user with admin rights on the computer could access anyone else’s Recall screenshots and database

The database was just a plaintext SQLite database, so essentially an extremely large Excel spreadsheet that’s designed to be easy to search

The database and screenshots were only encrypted at rest, which meant that once you logged into your computer, the database and screenshots were exactly as hard to open as any other file on your computer, and they were stored in an easy-to-access folder as well.
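A short sketch shows why a plaintext SQLite file is such a soft target once the disk is unlocked. The file name, table, and sample row below are invented for illustration and have nothing to do with Recall's real layout. What is not invented is the file format: an unencrypted SQLite database always begins with the 16-byte string `SQLite format 3` followed by a null byte, and any process that can read the file can query it without a password.

```python
import os
import sqlite3
import tempfile

# Every unencrypted SQLite file starts with this 16-byte header.
SQLITE_MAGIC = b"SQLite format 3\x00"


def looks_like_plain_sqlite(path):
    # Read the first 16 bytes straight off disk, no SQLite library needed.
    with open(path, "rb") as f:
        return f.read(16) == SQLITE_MAGIC


def dump_text(path):
    # A second, unrelated "process" opening the same file with no credentials.
    conn = sqlite3.connect(path)
    try:
        return [row[0] for row in conn.execute("SELECT ocr_text FROM snapshots")]
    finally:
        conn.close()


# Stand-in for the indexing service writing its database (hypothetical path).
workdir = tempfile.mkdtemp()
db_path = os.path.join(workdir, "index.db")
writer = sqlite3.connect(db_path)
writer.execute("CREATE TABLE snapshots (ocr_text TEXT)")
writer.execute("INSERT INTO snapshots VALUES (?)", ("account password: hunter2",))
writer.commit()
writer.close()

is_plain = looks_like_plain_sqlite(db_path)
leaked = dump_text(db_path)
print(is_plain, leaked)
```

Full-disk encryption doesn't change this picture while you're logged in, because the operating system hands decrypted bytes to any program allowed to read the file. The later promise to gate decryption behind Windows Hello is aimed at exactly this gap.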

Putting all that aside, when the feature was just announced and believed to be secure, many people thought it was just creepy. Microsoft, predictably, planned on the feature being turned on by default with an option to turn it off later, which is called an opt-out feature. That didn’t win them any points, especially given their penchant for changing people’s settings after OS updates.

People thought it was creepy that there was a searchable screenshot gallery of everything you had done on your computer for the past three months. You could exclude chosen websites and applications from having screenshots taken of them, but by default, it was going to include everything.

Any passwords it happened to capture, or HIPAA-covered information that was on the screen, or evidence of petty treason that you happened to be committing all had a searchable record now. And closing the tab, deleting the files, and archiving the emails that were captured in the screenshots did nothing to erase the data that was in the database. So as people thought through the implications of constant monitoring, they found more and more situations that they were uncomfortable with.

Now add back in the security concerns, and even non-technical people were starting to get upset. The furor rose to such a level that Microsoft had to walk the feature back some. They promised that it would now be implemented in an opt-in system, which meant that it would be off by default, but people could turn it on if they wanted. They also promised to put better security around the database itself, requiring a user to pass one of the Windows Hello authentication methods to decrypt the database and the screenshots.

It’s certainly a start, but the trust that was broken by trying to force adoption, not actually protecting the data the way they indicated that they had, and seeming to not actually be aware of the size of the gaping security hole they had left in the feature has left a long-lasting sour taste in many people’s mouths.

Apple

Apple’s Intelligence feature, however, was introduced with security as the first feature. Like Recall, Intelligence is done with on-device processors, in most cases. When that’s not possible, the necessary data will be sent to one of Apple’s servers with a feature called Private Cloud Compute. Apple says that the data is encrypted at least as securely as it would be on-device, and that researchers can verify the encryption because there will be publicly available logging. Before diving into the meat of the features of this new product, the keynote touched on security first, which I think was an attempt to reassure users that it was a feature they could feel safe using and keep them interested long enough to show them what the feature could actually do for them.

While the emphasis was on privacy straightaway, there were few details to be had about what circumstances would cause a device to reach out for compute time on the cloud server, or whether features requiring the cloud compute could be turned off. We still need more details about this, and as the software betas including Intelligence begin to roll out, we will assuredly get the same kind of rigorous testing from security researchers that we saw with the Recall feature.

After covering the security aspect, they talked about the hows, the whys, and the so-whats before really diving into the individual features. They sold you on the whole Intelligence package by trying to show you what outcomes you could expect: less stress when you’re picking your mom up from the airport, or when you need to leave work to get to your kid’s play, even if you never added those events to your calendar. They advertised a world in which fewer bits of information would fall through the digital cracks that lie between your email and Slack and text messages and Signal and Discord.

They explained that in order to do this, they were going to leverage everything they had access to in your phone, and create secure ways for third-party developers to do the same with their apps. After they presented the full vision, they proffered more specific features done with the local Intelligence product (including some truly awful generative AI images), before moving on to their third-party integration with OpenAI, which was the subject of much digital ink in the weeks before the announcement.

The OpenAI bit was much of the same generative AI garbage that we’ve seen in countless demos over the past couple of years. Creating a bedtime story based on your child’s interests and personality. Answering questions about what you can make for dinner with these ingredients on hand. Nothing really new there. The one feature that did seem new, however, was that you would be asked for permission to send data to OpenAI through the baked-in API every single time you attempted to use the features that required that data be sent off the device. It was another reminder that even though Apple was including a company that’s had its own trust speed bumps recently, you were still in control of who got to touch your data.

Most of the anger I’ve seen about Intelligence has surrounded the OpenAI part of it. There’s general disappointment in Apple for partnering with the company at all, given that its models are trained on copyrighted materials and it shows no remorse for that. Both artists and tech people also tend to dislike generative AI features, and that’s a significant part of the OpenAI features. Apple has baked some generative functions into their own native features, and those have drawn as much ire as the OpenAI integration.

We haven’t had nearly as much time for reactions to Apple Intelligence, but based on my quick eyeball of the responses to the keynote on Mastodon’s #WWDC24 hashtag[1], the floor and the ceiling of people’s views on the AI features were both higher, generally speaking. There’s still a lot of conversation about whether any of it is of any use at all, but for the people who haven’t written it off just for being AI, I think the reaction has been better.

The Benefits

Microsoft

So Microsoft started off in a worse place, botched the rollout of their big AI product, and has had to walk a lot of it back. What’s next for them?

I don’t think there will ever be widespread adoption of Recall. Even if they fix the security issues with it, I don’t think they’ve been able to demonstrate enough value with the feature to outweigh the creepy factor. In a way, they implemented all the creepiness of Apple Intelligence, but didn’t integrate it with the rest of the operating system in a way that provided any benefit to offset the creepiness.

Can you remember the last time you were looking for something that you had done on your computer—be it interacting with a webpage or finding a file—that you couldn’t solve with existing tools like looking at the browser history or searching the file manager? Does it happen often enough for you to allow your computer to index your every move and save that data for three months? I don’t identify with someone who would need a feature like this often enough to make the security and privacy risks worth it, and I haven’t spoken to anyone else who does either.

Apple

In contrast, instead of trying to sell users on the technology it was using in Apple Intelligence, the Apple keynote was light on jargon and heavy on use cases and benefits. It’s straight out of the “how do you sell someone a pen” sales book: don’t sell me the what, sell me on the so-what. First, they led with security and privacy, and then they showed you what it could do. The way they told the story emphasized the benefits while assuring people that the costs were minimal (save the costs involved in the fact that you’ll need the top tier of the newest phone to run any of this stuff). They created common scenarios that everyone encounters regularly, and demonstrated the value of their product in those situations.

You’re telling someone who is a living embodiment of “out of sight, out of mind” that my phone can help mitigate my brain’s challenges even better? Hell yeah. Sign me up. It’s secure and none of my data is going to be used to train a giant corporation’s machine learning algorithm and I don’t have to pay any extra for it? Awesome, that’s most of my concerns taken care of. I, as a current Siri user, am going to be able to do all the things I already do with Siri and it’s going to be better at those things and be able to do even more? Sick, let’s do it.

Apple presented a value proposition that…actually demonstrates value to people (at the very least, more value than Recall). That’s a pretty good way to sell people on a product that might otherwise be scary or intimidating or creepy.

Side Notes

I think there’s also something to be said about the computer vs. mobile device difference between these two products. For people who still use computers daily, I think the computer is inherently viewed as a more serious device. It’s where you do important things. It’s where you apply for a mortgage and write long emails and read documents and just generally do work. You’re accustomed to having more control over a computer than you do a phone.

Even when there’s an application that’s available on both a phone and a computer, it’s common to see the phone version of the app have a more limited feature set and more simplified layout than the computer version does. You’re the master of your domain on the computer, and because of that, you have a certain level of expectation of autonomy and control over the device. It’s the same way that people react differently to being filmed by CCTV cameras at the grocery store than they would if someone were to try to install cameras inside their house.[2]

While Apple Intelligence is coming to mobile devices and regular computers alike, most of the demos were done on mobile devices. They left most of the feature demos on computers for later in the keynote. In the beginning, you saw a purely mobile view of the AI features, taking place on a device that you likely view more as an entertainment and quick task box, rather than a big important work machine.

You’re used to the existing ML features on your phone already, so the transition to a more mature set of ML features isn’t as jarring. You also aren’t going to accidentally stumble into the folder on the operating system that holds all of the data like you can with Recall, because it simply doesn’t exist that way. Even if it did, iPhone end users are securely buckled into the front end of the UI, with almost no way to access the back end of the software.

Wrapping up

We’ve had two expansive, panopticonic AI software products announced in the last month. Time will tell which one is more successful, but here in the early stages of the launches, I’m putting my money on Apple’s horse. They’re almost always late to the game on major hardware and software features, but they also take the time to build a product that’s been developed in parallel with the story they’re going to tell you about it. They’re almost certainly more concerned with how a product is going to be received by their users than they are about making sure it’s the most advanced, bleeding-edge technology they can deliver. Anticipating objections and concerns from their customers is likely a big reason they’ve leaned so heavily into security. And that means they’re designing for their users, which generally results in a product that’s desirable.

Except iPadOS, which received no substantial updates this year and has left me disappointed yet again.

[1] Mastodon seems to be where the most technical folks live, so if there was an alarm bell to be rung, it would be happening there first.

[2] Before you bring up that people are voluntarily putting cameras inside their own houses already, I want to assure you that I think that actually plays into the metaphor. There was a product out there for Macs that was actually more invasive than Recall because it was also recording audio. There were people who wanted to install it, and it was different than the Recall situation because they were personally electing to install the software of their own accord. They were still in control of the surveillance. This app eventually pivoted because there wasn’t enough demand for it because everyone else thought it was too creepy.
